Here's a statistic that should change how you think about AI investment: Gartner predicts that through 2026, organizations will abandon 60% of AI projects that lack AI-ready data. The deciding factor in that prediction isn't a bad algorithm or the wrong model. It's data that wasn't ready.

A Gartner survey of data management leaders found that 63% of organizations either don't have or aren't sure they have the right data management practices for AI. That's nearly two-thirds of organizations that can't say with confidence that their data foundation will support AI.

If you're evaluating custom AI development for your business, data readiness is the single biggest factor that will determine whether you see real ROI from AI agents or join the majority that quietly shelves the project after burning through budget. This article explains what data readiness actually means, how to assess yours, and what to fix before writing a single line of AI code.

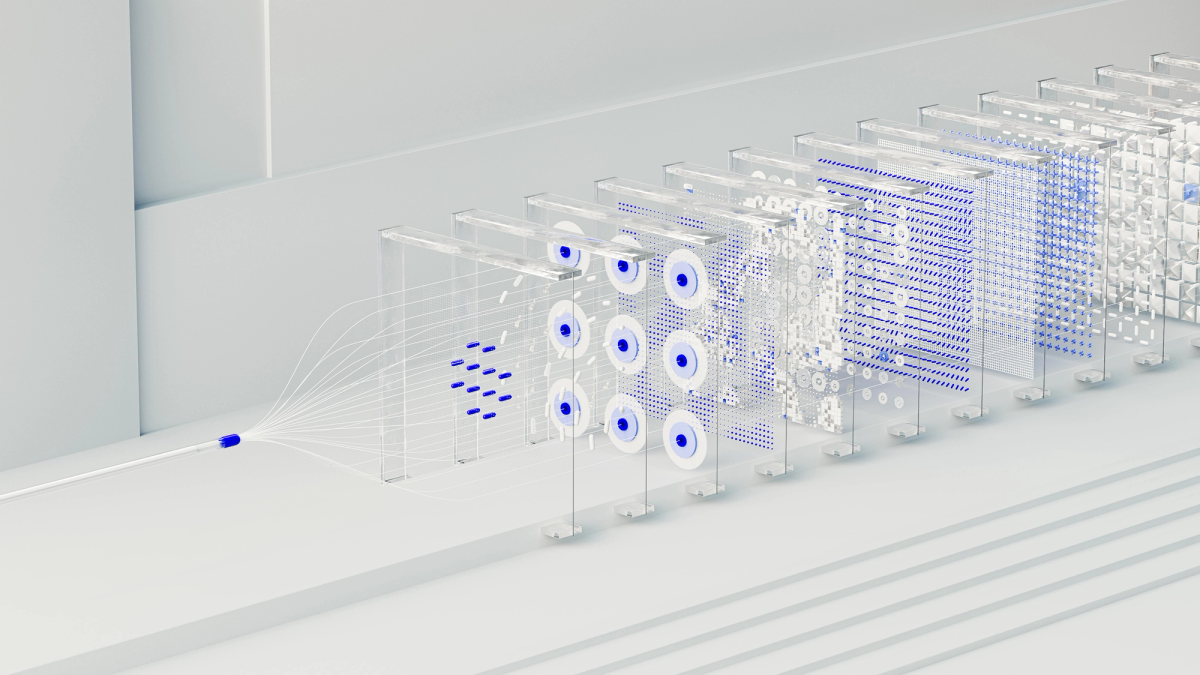

Why data, not models, determines AI success

The AI industry has a narrative problem. Most of the attention goes to models: which LLM is fastest, which architecture is newest, which framework is trendiest. But in practice, the model is rarely what makes or breaks an enterprise AI project. The data is.

The research is consistent across sources:

- RAND Corporation reports an 80.3% overall AI project failure rate, with data quality cited as the top technical cause

- MIT Project NANDA found that 95% of generative AI deployments produced zero measurable return on investment

- Gartner predicts 60% of AI projects without AI-ready data will be abandoned through 2026

- Organizations that conduct formal data readiness assessments see a 2.6x improvement in AI project success rates

The pattern is clear. Companies that treat data preparation as a prerequisite rather than an afterthought are dramatically more likely to succeed. The ones that skip it and go straight to model building almost always pay for it later.

What "AI-ready data" actually means

"AI-ready" isn't a marketing term. Gartner defines it specifically as data that is aligned to specific use cases, actively governed at the asset level, supported by automated pipelines with quality gates, managed through live metadata, and continuously quality-assured. The word "continuously" is where most organizations fall short.

Traditional data management works on reporting cadences: quarterly audits, annual governance reviews, monthly pipeline checks. AI models in production need data quality signals measured in hours. That mismatch is where most problems start.
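To make the "measured in hours" idea concrete, here is a minimal freshness check in Python. The 6-hour threshold and the name `is_fresh` are assumptions chosen for illustration, not values taken from Gartner or from any particular tool.

```python
from datetime import datetime, timedelta, timezone

# Assumed threshold for illustration; choose one that fits your use case.
MAX_AGE = timedelta(hours=6)

def is_fresh(last_updated: datetime, now: datetime, max_age: timedelta = MAX_AGE) -> bool:
    """Return True if the data was refreshed within max_age of now."""
    return now - last_updated <= max_age

now = datetime(2025, 1, 15, 12, 0, tzinfo=timezone.utc)
three_hours_old = datetime(2025, 1, 15, 9, 0, tzinfo=timezone.utc)
one_day_old = datetime(2025, 1, 14, 12, 0, tzinfo=timezone.utc)

print(is_fresh(three_hours_old, now))  # True
print(is_fresh(one_day_old, now))      # False
```

A check like this would typically run on a schedule against each source the AI depends on, so stale data is flagged before the model consumes it.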

In practical terms, data readiness for custom AI development breaks down into five dimensions:

- Accessibility: Can the AI system actually reach the data it needs? If your customer records live in Salesforce, your orders in an ERP, and your support tickets in Zendesk, the AI agent needs reliable access to all three. Data locked in silos or behind manual export processes isn't ready.

- Quality: How complete, accurate, and consistent is the data? Missing fields, duplicate records, conflicting formats between systems, and stale information all degrade AI performance. If 15% of your customer records are missing an email address, an AI agent that relies on email can't reach roughly 15% of your customers, no matter how good the model is.

- Structure: Is the data organized in a way AI can consume? Unstructured notes, inconsistent naming conventions, and free-text fields where there should be categories aren't just messy. They actively confuse AI models and produce unreliable outputs.

- Governance: Who owns the data? Who can access it? What compliance requirements apply? Are there retention policies, privacy constraints, or regulatory rules that limit how data can be used for training or inference? Governance gaps don't just create legal risk. They stall projects when someone finally asks "are we allowed to use this data?" midway through development.

- Volume and velocity: Is there enough data to train or fine-tune a model? Is new data flowing in fast enough to keep the model current? Some use cases need thousands of examples. Others work with smaller datasets. The key question is whether you have enough representative data for your specific AI use case.

The data readiness assessment: a practical checklist

Before starting any custom AI development project, run through these questions. If you can't answer most of them confidently, you have data work to do before the AI work begins.

Data inventory and access:

- Can you list every system that holds data relevant to your target AI use case?

- Can those systems be accessed via API, or does getting data out require manual exports?

- Is there a single source of truth for key entities (customers, orders, products), or do multiple systems hold conflicting versions?

Data quality:

- What percentage of records in your key datasets are complete (no missing critical fields)?

- When was the last time someone audited data quality across systems?

- Are there known duplicate, outdated, or inconsistent records that haven't been cleaned up?

Governance and compliance:

- Do you have documented data ownership for each system?

- Have legal and compliance teams reviewed whether the data can be used for AI training and inference?

- Are there GDPR, HIPAA, or industry-specific constraints that affect what data can be processed by an AI system?

If you couldn't confidently answer more than a third of these questions, that's not a reason to abandon your AI plans. It's a reason to invest in data preparation before committing to model development. As noted earlier, organizations that assess data readiness first see 2.6x better success rates on their AI projects.
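One way to make the "more than a third" threshold concrete is to record a yes or no for each question and count the gaps. This is only a sketch: the question keys and the answers below are placeholder values, and the checklist itself defines no formal scoring scheme.

```python
# Each checklist question maps to True (answered confidently) or False.
# The answers are placeholder values for illustration.
answers = {
    "systems_listed": True,
    "api_access": False,
    "single_source_of_truth": False,
    "completeness_known": True,
    "recent_quality_audit": False,
    "known_issues_cleaned": True,
    "ownership_documented": True,
    "legal_review_done": False,
    "regulatory_constraints_mapped": True,
}

def needs_data_work(answers: dict[str, bool]) -> bool:
    """True if more than a third of the questions lack a confident answer."""
    gaps = sum(1 for ok in answers.values() if not ok)
    return gaps > len(answers) / 3

open_questions = [q for q, ok in answers.items() if not ok]
print(f"{len(open_questions)} of {len(answers)} questions open: {open_questions}")
print("Prioritize data preparation:", needs_data_work(answers))
```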

Common data problems that kill AI projects

When we work with companies on custom AI development, certain data problems show up again and again. Recognizing them early saves months of wasted development time. Many of them overlap with the general signs that a business is ready for AI automation, viewed here from the data perspective.

Siloed systems are the most common. Sales data in one platform, support data in another, operations data in a third, and no reliable way to connect a customer record across all three. An AI agent that's supposed to provide a unified customer view can't do that if the underlying data is fragmented.
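A quick way to see how fragmented a customer view is: index each system's export by a shared key and check which customers appear where. The sketch below assumes email is a usable join key, which is often not the case in practice, and the three sample exports are hypothetical.

```python
def normalize_email(value: str) -> str:
    """Trim whitespace and lowercase so the same address matches across systems."""
    return value.strip().lower()

def index_by_email(rows: list[dict]) -> dict:
    return {normalize_email(r["email"]): r for r in rows}

# Hypothetical exports from three systems.
crm = [{"crm_id": "C-1", "email": "Ana@Example.com "}, {"crm_id": "C-2", "email": "bo@example.com"}]
support = [{"ticket_user": 901, "email": "ana@example.com"}]
billing = [{"account": "B-77", "email": "ANA@example.com"}, {"account": "B-78", "email": "cy@example.com"}]

systems = {
    "crm": index_by_email(crm),
    "support": index_by_email(support),
    "billing": index_by_email(billing),
}

# For every customer seen anywhere, list the systems that know about them.
all_emails = set().union(*systems.values())
coverage = {
    email: sorted(name for name, idx in systems.items() if email in idx)
    for email in sorted(all_emails)
}

for email, present_in in coverage.items():
    print(email, present_in)
```

Customers that show up in only one or two systems are the ones a "unified view" agent will get wrong.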

Inconsistent formatting sounds minor but compounds fast. Date fields stored as text strings in one system and timestamps in another. Product names spelled differently across databases. Customer IDs that don't match between CRM and billing. Every inconsistency is a potential failure point for an AI model trying to make sense of your data.
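The date problem in particular is cheap to fix once the incoming formats are known. This sketch normalizes text dates and Unix timestamps to a single ISO format. The list of accepted formats is an assumption (including reading `03/05/2024` as month first), and `%b` parsing depends on an English locale.

```python
from datetime import datetime, timezone

# Assumed input formats; extend this list to match what your systems emit.
# Note: "%m/%d/%Y" treats 03/05/2024 as March 5 (US-style), which is an assumption.
TEXT_FORMATS = ("%Y-%m-%d", "%m/%d/%Y", "%d %b %Y")

def to_iso_date(value) -> str:
    """Normalize a Unix timestamp (seconds, UTC) or a date string to YYYY-MM-DD."""
    if isinstance(value, (int, float)):
        return datetime.fromtimestamp(value, tz=timezone.utc).date().isoformat()
    for fmt in TEXT_FORMATS:
        try:
            return datetime.strptime(value.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    # Fail loudly rather than guess at an unknown format.
    raise ValueError(f"Unrecognized date format: {value!r}")

for raw in ["2024-03-05", "03/05/2024", "5 Mar 2024", 1709596800]:
    print(raw, "->", to_iso_date(raw))  # each prints 2024-03-05
```

Raising on unknown formats is deliberate: a silent wrong guess is exactly the kind of plausible-looking error that is hard to catch downstream.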

Historical data gaps create blind spots. If you want an AI agent to handle support ticket routing, it needs enough historical tickets with correct categorization and resolution data to learn from. If your ticketing system only has six months of clean data, or if categories were changed halfway through, the training dataset may not be sufficient.
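A simple count per category and per month can surface these gaps before any training starts. In the sketch below the ticket data is hypothetical and `MIN_EXAMPLES` is an assumed threshold kept tiny for the demo. Real projects usually need far more examples per category.

```python
from collections import Counter

MIN_EXAMPLES = 3  # Assumed threshold, deliberately small for this demo.

# Hypothetical historical tickets as (month, category) pairs.
tickets = [
    ("2024-01", "billing"), ("2024-01", "billing"), ("2024-02", "billing"),
    ("2024-02", "login"), ("2024-03", "login"),
    ("2024-04", "account_access"),  # category introduced partway through the history
    ("2024-05", "account_access"), ("2024-06", "account_access"),
]

per_category = Counter(category for _, category in tickets)
thin_categories = sorted(c for c, n in per_category.items() if n < MIN_EXAMPLES)
months_covered = len({month for month, _ in tickets})

print("Tickets per category:", dict(per_category))
print("Categories below threshold:", thin_categories)
print("Months of history:", months_covered)
```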

No data pipeline infrastructure means every data movement is manual. Someone exports a CSV, emails it, and another person imports it. For traditional reporting that's slow but workable. For AI systems that need fresh data flowing continuously, it's a non-starter. You need automated pipelines with quality gates before an AI agent can operate reliably.
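A quality gate does not have to be elaborate. The following is a minimal sketch of one pipeline step that validates each record and stops the batch if too many fail. The required fields, the 10% rejection limit, and the function names are all assumptions for illustration, not a reference to any specific pipeline tool.

```python
REQUIRED_FIELDS = ("customer_id", "email", "created_at")  # assumed schema

def validate(record: dict) -> list[str]:
    """Return a list of problems; an empty list means the record passes."""
    problems = [f"missing {f}" for f in REQUIRED_FIELDS if not record.get(f)]
    email = record.get("email") or ""
    if email and "@" not in email:
        problems.append("malformed email")
    return problems

def quality_gate(batch: list[dict], max_reject_rate: float = 0.1):
    """Split a batch into accepted and rejected records; raise if too many fail."""
    accepted, rejected = [], []
    for record in batch:
        problems = validate(record)
        if problems:
            rejected.append((record, problems))
        else:
            accepted.append(record)
    if batch and len(rejected) / len(batch) > max_reject_rate:
        raise ValueError(f"{len(rejected)} of {len(batch)} records failed validation")
    return accepted, rejected

# Hypothetical batch: nine clean records and one with a malformed email.
batch = [
    {"customer_id": i, "email": f"u{i}@example.com", "created_at": "2024-03-05"}
    for i in range(1, 10)
]
batch.append({"customer_id": 10, "email": "not-an-email", "created_at": "2024-03-05"})

accepted, rejected = quality_gate(batch)
print(len(accepted), "accepted;", len(rejected), "rejected")  # 9 accepted; 1 rejected
```

Rejected records come back with their reasons attached, so they can be routed to whoever owns the source system instead of being silently dropped.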

Traditional data management vs. AI-ready data

The gap between traditional data practices and what AI requires trips up a lot of organizations that consider themselves "data mature." Having dashboards, a data warehouse, and a BI team doesn't mean your data is AI-ready.

Here's where the differences matter most. Traditional data management focuses on periodic reporting: batch-processed data, monthly audits, manual quality checks, and metadata stored in documentation. AI-ready data management demands real-time or near-real-time data flows, continuous automated quality monitoring, active metadata that drives decisions, and data pipelines with built-in validation gates.

In traditional systems, a 5% error rate in a dataset might be acceptable because humans reviewing reports can spot anomalies. In an AI system, that 5% error rate compounds across thousands of automated decisions, producing results that look plausible but are systematically wrong. The tolerance for data quality issues drops significantly when you hand decision-making to a machine.
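A little arithmetic shows why. The numbers below are illustrative: 10,000 automated decisions at a 5% record error rate, plus the assumption that errors in different records are independent, which real data will not always satisfy.

```python
ERROR_RATE = 0.05
DECISIONS = 10_000

def expected_bad_decisions(error_rate: float, decisions: int) -> int:
    """Expected count of decisions built on a bad record (one record per decision)."""
    return round(error_rate * decisions)

def p_all_correct(error_rate: float, records_per_decision: int) -> float:
    """Chance that every record behind a decision is correct, assuming independent errors."""
    return (1 - error_rate) ** records_per_decision

print(expected_bad_decisions(ERROR_RATE, DECISIONS))  # 500

# When one decision combines several records, the odds of a fully clean input shrink.
for k in (1, 3, 5):
    print(k, round(p_all_correct(ERROR_RATE, k), 3))  # 0.95, 0.857, 0.774
```

So a decision that joins five records from a 95%-accurate dataset starts from fully correct inputs only about three times out of four.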

How to get your data ready: a phased approach

Data readiness doesn't mean perfection. It means "good enough for your specific use case, with a plan to improve." Here's the sequence that works.

- Pick one AI use case and map its data dependencies. Don't try to make all your data AI-ready at once. Identify the specific use case with the highest ROI potential, then work backward to understand exactly which data it needs, from which systems, at what freshness, and at what quality level.

- Audit the data that matters for that use case. Run a focused audit on completeness, accuracy, consistency, and accessibility. Score each data source on a simple scale. This doesn't need to be a months-long project. A thorough audit of 3 to 5 core data sources can often be done in 1 to 2 weeks.

- Fix the critical gaps. Not everything needs to be perfect. Focus on the issues that would directly prevent the AI from working: inaccessible systems, missing fields in critical datasets, format inconsistencies that prevent records from being matched across sources. The rest can be improved iteratively.

- Build the minimum pipeline infrastructure. Your AI agent needs data flowing to it automatically. At minimum, this means API connections to your core systems, a data transformation layer that normalizes formats, and basic quality validation before data reaches the model. This doesn't require a massive data engineering project. Modern integration tools make it possible to stand up basic pipelines in weeks, not months.

- Establish governance for AI-specific data use. Document what data the AI can access, who approved it, what compliance requirements apply, and how data quality will be monitored on an ongoing basis. This doesn't need to be bureaucratic. A clear, lightweight governance framework that everyone follows beats a comprehensive policy that nobody reads.
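The audit step above ("score each data source on a simple scale") can be as lightweight as a small table. The sketch below assumes a 1 to 5 scale and treats any score of 2 or lower as a critical gap. The scale, the threshold, and the sample scores are hypothetical.

```python
from statistics import mean

DIMENSIONS = ("completeness", "accuracy", "consistency", "accessibility")

# Hypothetical audit scores on a 1 (poor) to 5 (good) scale.
audit = {
    "crm":      {"completeness": 4, "accuracy": 4, "consistency": 3, "accessibility": 5},
    "erp":      {"completeness": 3, "accuracy": 4, "consistency": 2, "accessibility": 2},
    "helpdesk": {"completeness": 5, "accuracy": 3, "consistency": 4, "accessibility": 4},
}

def critical_gaps(scores: dict, threshold: int = 2) -> list[str]:
    """Dimensions scored at or below the threshold, which should be fixed first."""
    return [d for d in DIMENSIONS if scores[d] <= threshold]

for source, scores in audit.items():
    avg = mean(scores[d] for d in DIMENSIONS)
    print(f"{source}: average {avg:.2f}, critical gaps: {critical_gaps(scores)}")
```

The averages give a rough ranking, but the list of critical gaps is what feeds the "fix the critical gaps" step: in this sample, the ERP's consistency and accessibility would come first.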

What this means for custom AI development

If you're considering custom AI development, data readiness should be part of your evaluation criteria when choosing an AI development company. A good development partner will assess your data readiness before proposing a solution. A bad one will skip straight to architecture diagrams and pricing.

The build vs. buy decision for AI is also directly affected by data readiness. Off-the-shelf AI tools assume your data is already clean and accessible. Custom solutions can include data preparation as part of the engagement, which is more expensive upfront but far less expensive than building on a bad foundation and rebuilding six months later.

The companies that get the best results from custom AI development are the ones that treat data preparation as phase one of the project, not a separate initiative they'll get to later. When data readiness and AI development are handled by the same team, the feedback loop is tight: the engineers building the model can flag data issues immediately, and the data work is guided by what the model actually needs.

How Attract Group handles data readiness

Every custom AI development project we take on at Attract Group starts with a data readiness assessment. Before we quote timelines or costs, we map your data landscape: what systems hold the data, how it flows between them, where the quality gaps are, and what needs to change before an AI agent can operate reliably. Our AI agent development services include data preparation as a standard phase, not an optional add-on.

We've seen too many projects fail because a vendor built a technically impressive agent on top of a data foundation that couldn't support it. We'd rather tell you upfront that your data needs work and help you fix it than deliver an agent that looks good in a demo and falls apart in production.

If you're planning a custom AI project and aren't sure whether your data is ready, that's the right starting point for a conversation. We'll give you an honest assessment and a practical path forward.